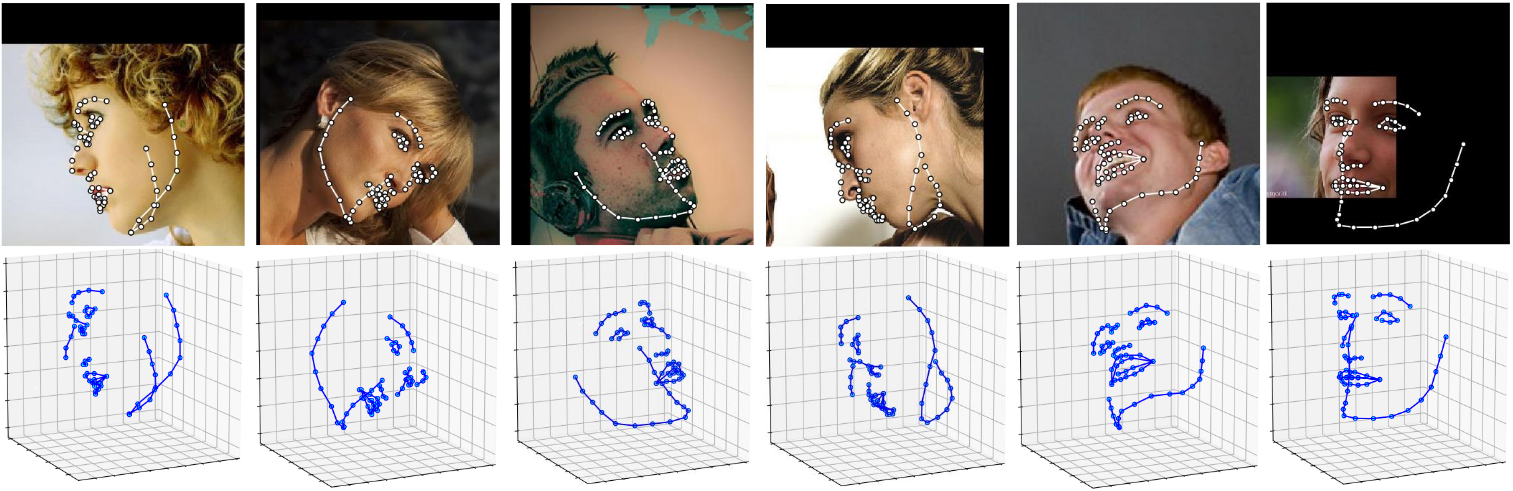

Detect facial landmarks from Python using the world's most accurate face alignment network, capable of detecting points in both 2D and 3D coordinates.

Built using FAN's state-of-the-art deep-learning-based face alignment method.

Note: The Lua version is available here.

For numerical evaluations it is highly recommended to use the Lua version, which uses models identical to those evaluated in the paper. More models will be added soon.
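As a rough illustration of what a numerical evaluation computes, the sketch below implements a normalized mean error (NME). It averages the Euclidean distance between predicted and ground-truth landmarks, then divides by the square root of the ground-truth bounding-box area. That normalization is one common convention and an assumption here, so consult the paper for the exact protocol behind its reported numbers. The function `nme` is hypothetical and not part of face_alignment.

```python
import math


def nme(pred, gt, box_w, box_h):
    """Normalized mean error (assumed convention: divide by sqrt(box_w * box_h)).

    pred, gt: equal-length sequences of (x, y) or (x, y, z) landmark coordinates.
    """
    if not gt or len(pred) != len(gt):
        raise ValueError("pred and gt must be non-empty and the same length")
    norm = math.sqrt(box_w * box_h)
    # math.dist works for points of any dimension (2D or 3D landmarks)
    total = sum(math.dist(p, g) for p, g in zip(pred, gt))
    return total / (len(gt) * norm)


# Toy example: every predicted point is off by (3, 4), i.e. a distance of 5 px.
gt = [(10.0, 10.0), (20.0, 10.0), (15.0, 20.0)]
pred = [(x + 3.0, y + 4.0) for x, y in gt]
print(nme(pred, gt, box_w=100.0, box_h=100.0))  # 0.05
```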

Detect 2D facial landmarks in a picture:

```python
import face_alignment
from skimage import io

fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, flip_input=False)

image = io.imread('../test/assets/aflw-test.jpg')
# One (68, 2) array of (x, y) points per detected face, or None if no face is found
preds = fa.get_landmarks(image)
```

Detect 3D facial landmarks in a picture:

```python
import face_alignment
from skimage import io

fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.THREE_D, flip_input=False)

image = io.imread('../test/assets/aflw-test.jpg')
# One (68, 3) array of (x, y, z) points per detected face
preds = fa.get_landmarks(image)
```

Process an entire directory in one go:

```python
import face_alignment

fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, flip_input=False)

# Runs detection and landmark prediction on every image in the folder
preds = fa.get_landmarks_from_directory('../test/assets/')
```

By default the package uses the SFD face detector. Pass face_detector to switch:

```python
import face_alignment

fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, face_detector='sfd')
```

The library supports multiple face detection backends. SFD is the default and the most accurate, but it is also slower; alternatives such as BlazeFace, YuNet, or RetinaFace run considerably faster. SCRFD requires the optional onnxruntime package (pip install onnxruntime).
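Because some backends depend on optional packages, a script may want to fall back to a PyTorch-only detector when a dependency is missing. The sketch below is hypothetical (`pick_detector` and `OPTIONAL_DEPS` are not part of face_alignment): it only checks whether a module can be found with `importlib.util.find_spec`. The onnxruntime requirement for SCRFD comes from the text above; mapping YuNet to `cv2` is an assumption based on its "OpenCV DNN" note in the detector table.

```python
import importlib.util

# Optional module each detector needs beyond PyTorch (None = nothing extra).
# 'scrfd' -> onnxruntime is stated in the README; 'yunet' -> cv2 is an assumption
# based on its "OpenCV DNN" note; 'dlib' -> the dlib Python package.
OPTIONAL_DEPS = {
    "sfd": None,
    "blazeface": None,
    "retinaface": None,
    "yunet": "cv2",
    "scrfd": "onnxruntime",
    "dlib": "dlib",
}


def is_available(detector: str) -> bool:
    """True if the detector's optional module (if any) can be found on this machine."""
    if detector not in OPTIONAL_DEPS:
        raise ValueError(f"unknown detector: {detector!r}")
    module = OPTIONAL_DEPS[detector]
    return module is None or importlib.util.find_spec(module) is not None


def pick_detector(preferred: str = "scrfd", fallback: str = "blazeface") -> str:
    """Return `preferred` if its dependency is importable, otherwise `fallback`."""
    return preferred if is_available(preferred) else fallback


print(pick_detector())  # 'scrfd' when onnxruntime is installed, else 'blazeface'
```

The returned name can then be passed as `face_detector=` when constructing `FaceAlignment`.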

| Detector | face_detector= | CPU (ms) | MPS (ms) | Devices |
|---|---|---|---|---|
| SFD | 'sfd' | 138.8 | 33.1 | CPU / CUDA / MPS |
| BlazeFace | 'blazeface' | 10.9 | 8.2 | CPU / CUDA / MPS |
| YuNet | 'yunet' | 5.6 | N/A | CPU only (OpenCV DNN) |
| RetinaFace | 'retinaface' | 25.2 | 15.5 | CPU / CUDA / MPS |
| SCRFD | 'scrfd' | 23.1 | N/A | CPU only (ONNX Runtime) |
| dlib (deprecated) | 'dlib' | N/A | N/A | CPU / CUDA |

Timings: detection only, median over 20 runs on a single-face 450x450 image, Apple M2.
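The timings above are medians over 20 runs. A minimal standard-library sketch of that measurement style follows; the helper name `median_latency_ms`, the untimed warm-up call, and the stand-in workload are assumptions, not the script used to produce the table. To time a real detector, pass a callable that runs detection only on your test image.

```python
import statistics
import time


def median_latency_ms(fn, *args, runs=20, warmup=1):
    """Call fn(*args) `warmup` times untimed, then `runs` times; return the median in ms."""
    for _ in range(warmup):
        fn(*args)  # warm-up: excludes one-off costs such as lazy initialization
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(*args)
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


# Stand-in workload so the sketch runs without a model.
print(f"{median_latency_ms(sum, range(100_000)):.3f} ms")
```

The median is less sensitive than the mean to occasional slow runs caused by other processes.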

You can also skip detection entirely by passing face_detector='folder', which loads pre-computed bounding boxes from .npy, .t7, or .pth files matching each image filename. This is useful for evaluation with ground truth boxes.
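The exact lookup rule is not spelled out above. The sketch below assumes a box file sits in the same directory as its image and shares its stem (for example img_001.jpg and img_001.npy). `candidate_box_files` and `find_box_file` are hypothetical helpers, not part of face_alignment; check the library's folder detector for the real convention.

```python
from pathlib import Path

# Extensions the README lists for the 'folder' detector.
BOX_EXTENSIONS = (".npy", ".t7", ".pth")


def candidate_box_files(image_path):
    """Assumed convention: same directory, same stem, one of BOX_EXTENSIONS."""
    image_path = Path(image_path)
    return [image_path.with_suffix(ext) for ext in BOX_EXTENSIONS]


def find_box_file(image_path):
    """Return the first existing candidate box file, or None if there is none."""
    for candidate in candidate_box_files(image_path):
        if candidate.is_file():
            return candidate
    return None


print([p.name for p in candidate_box_files("data/img_001.jpg")])
# ['img_001.npy', 'img_001.t7', 'img_001.pth']
```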

```python
import face_alignment

# BlazeFace back camera model (larger input, better for distant faces)
fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, face_detector='blazeface',
                                  face_detector_kwargs={'back_model': True})

# SCRFD (requires: pip install onnxruntime)
fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, face_detector='scrfd')

# Use pre-computed bounding boxes from files alongside images
fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, face_detector='folder')
```

To choose the device (GPU or CPU) on which the code runs, pass the device argument explicitly.
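When the same script must run on machines with and without a GPU, the device string can be picked at runtime. The helper below is a hypothetical sketch: it takes the availability flags as arguments so it runs without PyTorch installed. In practice you would pass `torch.cuda.is_available()` and `torch.backends.mps.is_available()`, both of which exist in PyTorch 2.x.

```python
def pick_device(cuda_available: bool, mps_available: bool) -> str:
    """Prefer CUDA, then Apple's MPS backend, then fall back to the CPU."""
    if cuda_available:
        return "cuda"
    if mps_available:
        return "mps"
    return "cpu"


# In a real script (requires PyTorch and face_alignment):
#   import torch
#   device = pick_device(torch.cuda.is_available(), torch.backends.mps.is_available())
#   fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, device=device)
print(pick_device(cuda_available=False, mps_available=True))  # mps
```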

The landmark network is compiled with torch.compile by default for faster inference. Compilation artifacts are cached to disk, so only the first run is slow (~25s). Pass compile=False to disable.

```python
import torch
import face_alignment

# Use 'cuda' for NVIDIA GPUs or 'mps' for Apple Silicon GPUs.
fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, dtype=torch.bfloat16, device='cuda')

# Skip compilation for instant startup
fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, device='cpu', compile=False)

# Max faces per forward pass in multi-face images (default: 1); keep it low on low-memory GPUs
fa = face_alignment.FaceAlignment(face_alignment.LandmarksType.TWO_D, device='cuda', max_batch_size=8)
```

Please also see the examples folder.
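max_batch_size caps how many face crops from one image go through the landmark network at once. The sketch below only illustrates that idea with plain Python chunking; the helper name `batches` and the assumption that crops are processed in consecutive chunks are illustrative, not the library's internal code.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def batches(items: Sequence[T], max_batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive chunks of at most max_batch_size items."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be >= 1")
    for start in range(0, len(items), max_batch_size):
        yield list(items[start:start + max_batch_size])


# Ten hypothetical face crops with max_batch_size=4 -> three forward passes.
faces = [f"face_{i}" for i in range(10)]
print([len(b) for b in batches(faces, 4)])  # [4, 4, 2]
```

Larger chunks mean fewer forward passes but higher peak GPU memory, which is why a small value suits low-memory GPUs.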

- Python 3.9+

- Linux, Windows or macOS

- PyTorch (>=2.0)

While not required, for optimal performance (especially for the detector) it is highly recommended to run the code on a CUDA-enabled GPU.

The easiest way to install it is using either pip or conda:

```bash
pip install face-alignment
```

Alternatively, you can build it from source.

Install PyTorch and its dependencies; please check the PyTorch README for instructions.

```bash
git clone https://github.com/1adrianb/face-alignment
cd face-alignment
pip install -r requirements.txt
pip install .
```

A Dockerfile is provided to build images with CUDA and cuDNN support. For more information about building and running Docker images, check the official Docker documentation.

```bash
docker build -t face-alignment .
```

While the work is presented here as a black box, if you want to know more about the intrinsics of the method, please check the original paper on arXiv or on my webpage.

All contributions are welcome. If you encounter any issue (including examples of images where it fails), feel free to open an issue. If you plan to add new features, please open an issue to discuss them before making a pull request.

```bibtex
@inproceedings{bulat2017far,
  title={How far are we from solving the 2D \& 3D Face Alignment problem? (and a dataset of 230,000 3D facial landmarks)},
  author={Bulat, Adrian and Tzimiropoulos, Georgios},
  booktitle={International Conference on Computer Vision},
  year={2017}
}
```

For citing dlib, PyTorch, or any other packages used here, please check the original pages of their respective authors.

- To the PyTorch team for providing such an awesome deep learning framework.

- To my supervisor for his patience and suggestions.

- To all other Python developers who made available the rest of the packages used in this repository.